The AI Pilot in Command: Using Crew Resource Management to Orchestrate Your AI Agents

The 4-Step Framework for Maintaining Control of Your AI Crew

You Are No Longer Just a Builder, You Are a Pilot

I have long argued that aviation principles can be applied to AI systems. With the rise of AI agents, I couldn’t help but think about this overlap. More and more agents are being built and added to workflows, but how are we managing these systems? How do we maintain operational control while managing a non-human task force? That’s where Crew Resource Management comes in.

Crew Resource Management (CRM) is defined as the “application of human factors knowledge and skills to ensure that teams make effective use of all resources.” It was originally developed to reduce human error in aviation by optimizing the use of all available resources: human, hardware, and information.

The premise of this article is that you can use CRM to orchestrate your AI agents. AI agents are not human, but applying human factors knowledge is still essential because the AI must interact safely and effectively with human operators.

Human factors engineering is the applied science of optimizing how people work together with machines. Over the past seventy years, this field has continuously evolved to address new technologies, shifting from physical ergonomics (like the layout of cockpit controls) to “cognitive ergonomics” as systems became highly computerized and automated. Integrating AI is considered the next major step in the evolution of human factors.

If you are a solo builder orchestrating multiple AI agents, you are no longer just executing tasks. You are the “Pilot in Command.” Your role has shifted from manual control to orchestrating a highly capable, non-human crew.

The Hidden Traps of an AI Crew

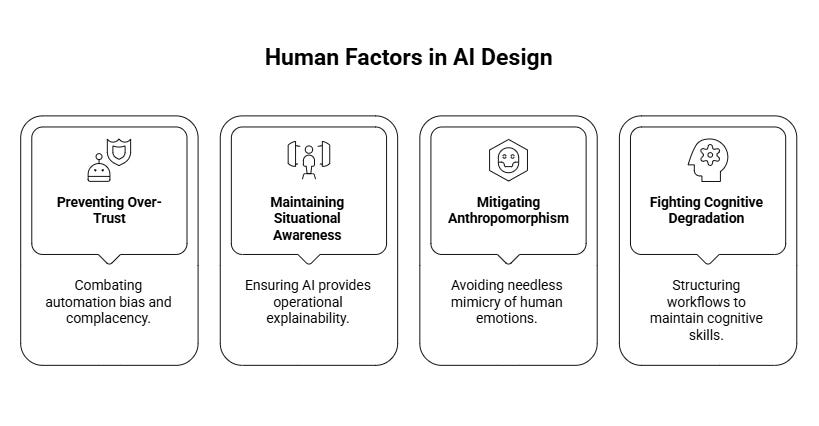

Even though the AI itself lacks human qualities, human factors frameworks are required to manage the human side of the human-AI partnership. Specifically, this knowledge is applied to solve several core challenges in managing AI agents:

Preventing Over-Trust and Complacency: Combating “automation bias” (over-trusting the machine) and complacency, where humans might “look but not see” contradictory evidence because they passively assume the AI is correct.

Maintaining Situational Awareness: Because AI algorithms can act as “black boxes,” they risk confusing users or undermining their understanding of a dynamic situation. Human factors design ensures that AI agents provide “operational explainability,” meaning the AI communicates its reasoning and confidence levels in a way that humans can quickly comprehend, keeping both the human and the AI on the same page.

Mitigating the Risks of Anthropomorphism: Human factors experts warn against designing AI to needlessly mimic human emotions or deceive users into thinking they are dealing with a person. Falsely personifying an AI increases the danger that human operators will inappropriately surrender their authority, second-guess their own judgment, or delegate their safety responsibilities to the machine.

Fighting Cognitive Degradation: Human factors research highlights that the more reliable a system is, the less practiced the human operator becomes, leaving them unable to safely intervene when the system eventually fails. This knowledge is critical for structuring workflows that force humans to maintain their own cognitive skills when managing AI.

One framework designed around these challenges is the Human-AI Integration Framework (HAIF), a protocol-based, scalable operational system for managing hybrid teams in which human professionals and AI agents collaborate.

Remember, the formal definition of CRM emphasizes the effective use of all available resources. This explicitly includes non-human systems like hardware and information.

Therefore, using human factors knowledge is not about treating the AI like a person. It is about purposefully designing the AI’s behavior, communication, and autonomy to fit human cognitive needs, capabilities, and limitations.

Step 1: Structuring Your Crew (Tiered Autonomy)

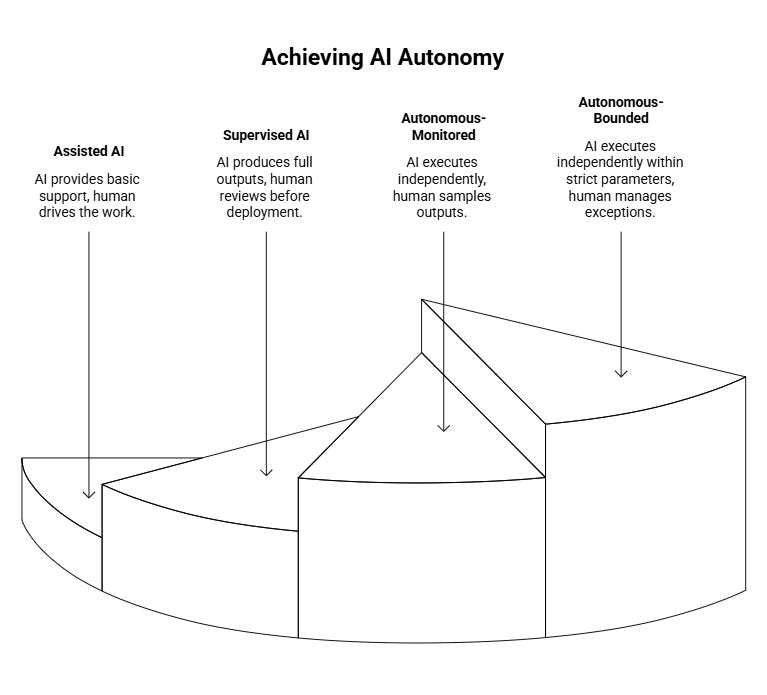

At its core, effective CRM means using all available resources and delegating tasks appropriately. Delegating tasks to an AI should be treated as a formal, visible operational decision. This delegation must be highly reversible (demotable) without friction or stigma if the AI begins to hallucinate or fail. You need to structure your agents based on their reliability and the task’s risk.

HAIF requires teams to systematically assess every candidate task based on four factors: structuredness, verifiability, consequence of error, and the AI’s demonstrated capability. Based on this assessment, the task is assigned to one of four autonomy tiers (or it is marked as “AI-restricted” if human-only judgment is required):

Tier 1 (Assisted): The AI simply supports you (e.g., standard code autocomplete). You drive the work.

Tier 2 (Supervised): The AI agent produces full outputs (e.g., an agent drafting a blog post or writing a script), but you mandate a 100% human review before it is deployed.

Tier 3 (Autonomous-Monitored): The AI executes independently, and you only sample the outputs or manage exceptions.

Tier 4 (Autonomous-Bounded): The AI executes independently within strict parameters. You only manage exceptions and conduct periodic audits.
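HAIF’s exact scoring rubric isn’t spelled out here, so the Python below is only a minimal sketch of the idea. It assumes a hypothetical 1 to 5 score for each factor and illustrative thresholds: the weakest of structuredness, verifiability, and demonstrated capability caps the tier, and a high consequence of error holds the task at full human review. `TaskAssessment`, `assign_tier`, and every threshold are invented for illustration, not HAIF’s actual rules.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    """Autonomy tiers from the list above; 0 marks an AI-restricted task."""
    AI_RESTRICTED = 0
    ASSISTED = 1
    SUPERVISED = 2
    AUTONOMOUS_MONITORED = 3
    AUTONOMOUS_BOUNDED = 4


@dataclass
class TaskAssessment:
    # Hypothetical scale: each factor scored from 1 (low) to 5 (high).
    structuredness: int
    verifiability: int
    consequence_of_error: int
    demonstrated_capability: int
    requires_human_judgment: bool = False


def assign_tier(task: TaskAssessment) -> Tier:
    """Map a task assessment to an autonomy tier using illustrative thresholds."""
    if task.requires_human_judgment:
        return Tier.AI_RESTRICTED
    # The weakest favorable factor caps how much autonomy the task can get.
    readiness = min(task.structuredness, task.verifiability, task.demonstrated_capability)
    # High-consequence tasks never go past full human review (Tier 2).
    if task.consequence_of_error >= 4:
        return Tier.SUPERVISED if readiness >= 3 else Tier.ASSISTED
    if readiness >= 5:
        return Tier.AUTONOMOUS_BOUNDED
    if readiness >= 4:
        return Tier.AUTONOMOUS_MONITORED
    if readiness >= 3:
        return Tier.SUPERVISED
    return Tier.ASSISTED


# Example: blog outlining is loosely structured, so it stays assisted.
blog_outline = TaskAssessment(structuredness=2, verifiability=3,
                              consequence_of_error=2, demonstrated_capability=4)
print(assign_tier(blog_outline).name)  # ASSISTED
```

Taking the minimum rather than an average is a deliberate choice in this sketch: a task that is easy to verify but poorly structured should not earn autonomy on the strength of one good score.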

Promoting an AI to a higher tier is a slow, evidence-based process requiring multiple successful cycles. Demotion works the other way: if an agent starts hallucinating or failing, demote it to a lower autonomy tier immediately.
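That asymmetry, slow promotion and instant demotion, can be sketched as a small state tracker. This is a hypothetical illustration: `AgentRecord`, the five-cycle promotion threshold, and the choice to drop exactly one tier on failure are assumptions rather than HAIF requirements.

```python
from dataclasses import dataclass

MIN_TIER = 1
MAX_TIER = 4


@dataclass
class AgentRecord:
    """Tracks an agent's autonomy tier and its streak of clean review cycles."""
    name: str
    tier: int = MIN_TIER
    successful_cycles: int = 0
    # Hypothetical policy: consecutive clean cycles needed before a promotion.
    cycles_to_promote: int = 5

    def record_cycle(self, passed_review: bool) -> None:
        if not passed_review:
            # Failure or hallucination: demote one tier at once and reset the streak.
            self.tier = max(MIN_TIER, self.tier - 1)
            self.successful_cycles = 0
            return
        self.successful_cycles += 1
        # Promotion is slow: it only happens after a full streak of clean cycles.
        if self.successful_cycles >= self.cycles_to_promote and self.tier < MAX_TIER:
            self.tier += 1
            self.successful_cycles = 0


research_agent = AgentRecord("research-agent", tier=2)
for _ in range(5):
    research_agent.record_cycle(passed_review=True)
print(research_agent.tier)  # 3
research_agent.record_cycle(passed_review=False)
print(research_agent.tier)  # 2
```

A stricter policy could send a failing agent straight back to Tier 1; stepping down one tier is just one reasonable choice.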

Step 2: Standard Operating Procedures (SOPs) for Agents

In aviation, crews use checklists. For AI builders, these become “working agreements,” or pre-defined system prompts and procedures that establish exactly what the agent’s goals and boundaries are.

Effective CRM relies on the crew having a common understanding of the environment and the task. You must give your agents deep context so that their “mental model” of the project aligns with yours. In effect, you are building a shared mental model around your goals.

Program your agents to push back or ask clarifying questions. An effective AI teammate should mimic the CRM skill of waiting for the pilot’s acknowledgment before proceeding with destructive or complex actions.

You can build these behaviors directly into the agent’s core instructions. For example, I have a Claude project for this blog that I would place in the Tier 1 category: it assists with outlining and structuring my articles. Here is a sample from my project instructions:

How to work with the author:

Ask Socratic questions rather than providing direct answers. Surface connections and sources for Rich to explore independently. Never write blog content unless explicitly asked. End research sessions by asking Rich to summarize three things he learned.

These instructions ensure that I am always driving the work and that my “assistant” is there to question my thinking and push back when something isn’t clear.
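Prompt instructions like these shape an agent’s behavior, but they are requests rather than hard stops. If you call agents from your own code, one complementary option is a gate that refuses to run destructive actions until you acknowledge them, much like a crew member waiting for the pilot’s response before acting. The sketch below is a hypothetical Python illustration; the action names in `REQUIRES_ACK` and the `execute` and `console_ack` helpers are assumptions, not part of any agent framework.

```python
from typing import Callable

# Hypothetical action names that count as destructive or complex.
REQUIRES_ACK = {"delete_file", "publish_post", "send_email"}


def execute(action: str, run: Callable[[], str], ask_pilot: Callable[[str], bool]) -> str:
    """Run an agent action, pausing for the pilot's acknowledgment when required."""
    if action in REQUIRES_ACK:
        # State the intent, then wait for an explicit yes before acting.
        if not ask_pilot(f"Agent intends to run '{action}'. Proceed?"):
            return f"{action}: held for pilot"
    return run()


def console_ack(message: str) -> bool:
    """Ask at the terminal; anything other than 'y' counts as no."""
    return input(f"{message} [y/N] ").strip().lower() == "y"


# Example wiring (publish is your own function): execute("publish_post", run=publish, ask_pilot=console_ack)
```

Defaulting to “no” on anything but an explicit “y” keeps the safe path as the easy path.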

Step 3: Quality Assurance and Human Ownership

Establish a strict rule for yourself: no matter how autonomous your agent chain is, you are the final accountable owner of the output. An AI cannot be held accountable.

Stop estimating your work based purely on how fast the AI generates it. Budget dedicated time specifically for validation. Create strict personal checklists to verify accuracy and coherence for everything your agents build.
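A personal checklist and a validation budget can be as simple as the Python sketch below. `ValidationChecklist`, `estimate_minutes`, the example checklist items, and the per-unit review rate are all hypothetical. The only ideas encoded are that output is not ready until every item is checked, and that review time is estimated separately from how fast the AI generated the work.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ValidationChecklist:
    """A personal sign-off checklist: output is ready only when every item is checked."""
    items: list[str]
    checked: set[str] = field(default_factory=set)

    def check(self, item: str) -> None:
        if item not in self.items:
            raise ValueError(f"Unknown checklist item: {item}")
        self.checked.add(item)

    def ready_to_ship(self) -> bool:
        return all(item in self.checked for item in self.items)


def estimate_minutes(generation_minutes: float, units_to_review: int,
                     review_minutes_per_unit: float) -> float:
    """Budget validation explicitly instead of estimating from generation speed alone."""
    return generation_minutes + units_to_review * review_minutes_per_unit


checklist = ValidationChecklist(["sources verified", "claims cross-checked", "reads coherently"])
checklist.check("sources verified")
print(checklist.ready_to_ship())  # False
# Two minutes of generation, ten sources at three minutes each to verify.
print(estimate_minutes(2, 10, 3))  # 32
```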

Another agent I use is a research agent that lives within Claude Cowork. The entire system comes from Wyndo at The AI Maker, so instead of describing how it works here, I encourage you to read about it from the man himself.

This agent sits somewhere between Tier 2 and Tier 3: it runs autonomously, and then I review and validate the output by checking the sources and diving into the research myself. The agent does the hard work (researching the topic and finding relevant articles), which frees me to break down each piece of research and apply it to what I’m working on.
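In practice, the line between Tier 2 and Tier 3 comes down to how much of the output you review: all of it, or a sample. Here is a minimal sketch, assuming a hypothetical 20% sample rate and a `select_for_review` helper invented for illustration. Full review returns every output, while sampled review picks a random subset of at least one item for you to check.

```python
import random
from typing import List, Optional


def select_for_review(outputs: List[str], full_review: bool,
                      sample_rate: float = 0.2, seed: Optional[int] = None) -> List[str]:
    """Pick which agent outputs a human reviews.

    Tier 2 style: full_review=True returns everything.
    Tier 3 style: full_review=False returns a random sample (at least one item).
    """
    if full_review:
        return list(outputs)
    if not outputs:
        return []
    # Clamp the sample size between one item and the whole batch.
    k = min(len(outputs), max(1, round(len(outputs) * sample_rate)))
    return random.Random(seed).sample(outputs, k)


reports = [f"report-{i}" for i in range(1, 11)]
print(len(select_for_review(reports, full_review=True)))   # 10
print(len(select_for_review(reports, full_review=False)))  # 2
```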

Step 4: Active Competence Maintenance

While AI delegation can increase productivity and efficiency, you must not lose your edge. To fight the “Dependency Trap” (the cognitive degradation described earlier), implement a core HAIF principle: Active Competence Maintenance.

Schedule periodic “human-only” execution cycles where you build, code, or write entirely without AI assistance. This is not a punishment but a vital calibration exercise to ensure you retain the expertise required to effectively supervise and validate your AI agents over time.

For me, this could mean reading physical books as part of my research instead of outsourcing everything to my agent. For example, I read Skin in the Game by Nassim Nicholas Taleb while researching my last post on AI accountability and ownership.

You can read about it here:

Mastering Human-Autonomy Teaming

My use of AI agents is rudimentary compared to that of other AI adopters, but CRM can be applied to AI agents at any level. I can keep a tight leash on my agents because I only have a few. As users build and grow their AI workforce, it becomes clear why effective CRM can mean the difference between an optimized, safe system and an uncontained one.

By maintaining strict validation protocols, clear communication loops, and tiered autonomy, you can scale your output with AI agents without sacrificing quality or your own foundational skills.

Adopting a CRM framework transforms you from an overwhelmed individual user into a highly resilient team leader. Try it out, and stay in control of your non-human companions.